Daniel Hjermitslev

AI Engineer / Bewise / Denmark

I build software that thinks for itself — autonomous agents, intelligent pipelines, and AI that works without hand-holding.

Background

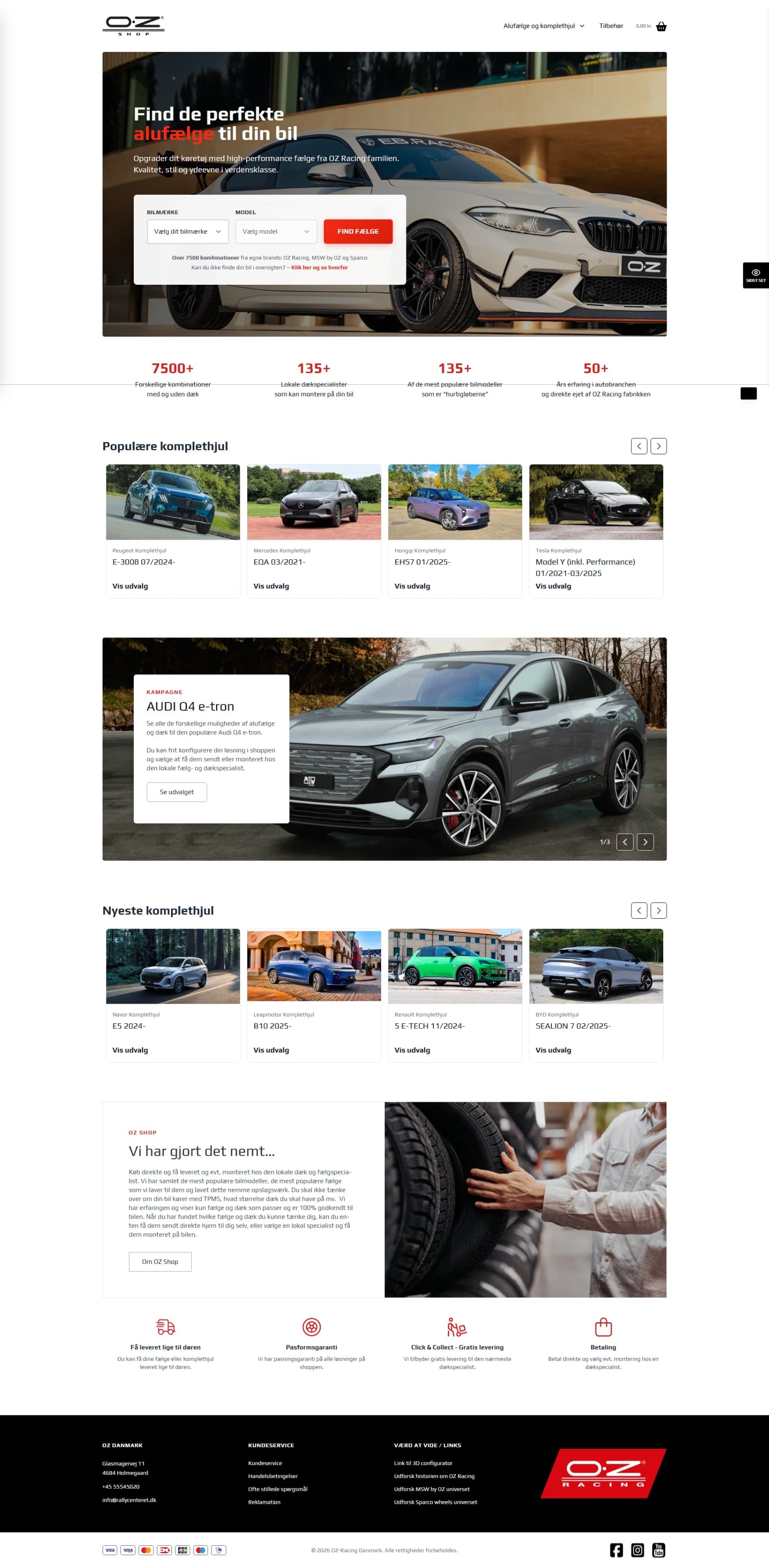

AI Engineer at Bewise, building AI-integrated e-commerce on the DanDomain platform. I design systems where LLMs do real work — multi-agent pipelines, autonomous daemons, intelligent product search. Every architecture decision, every tradeoff, every quality gate is mine.

Previously at VENZO, Aeon Group, and WEBFAIR. I ship solo and in teams — and I leave every codebase better than I found it.

AI Systems

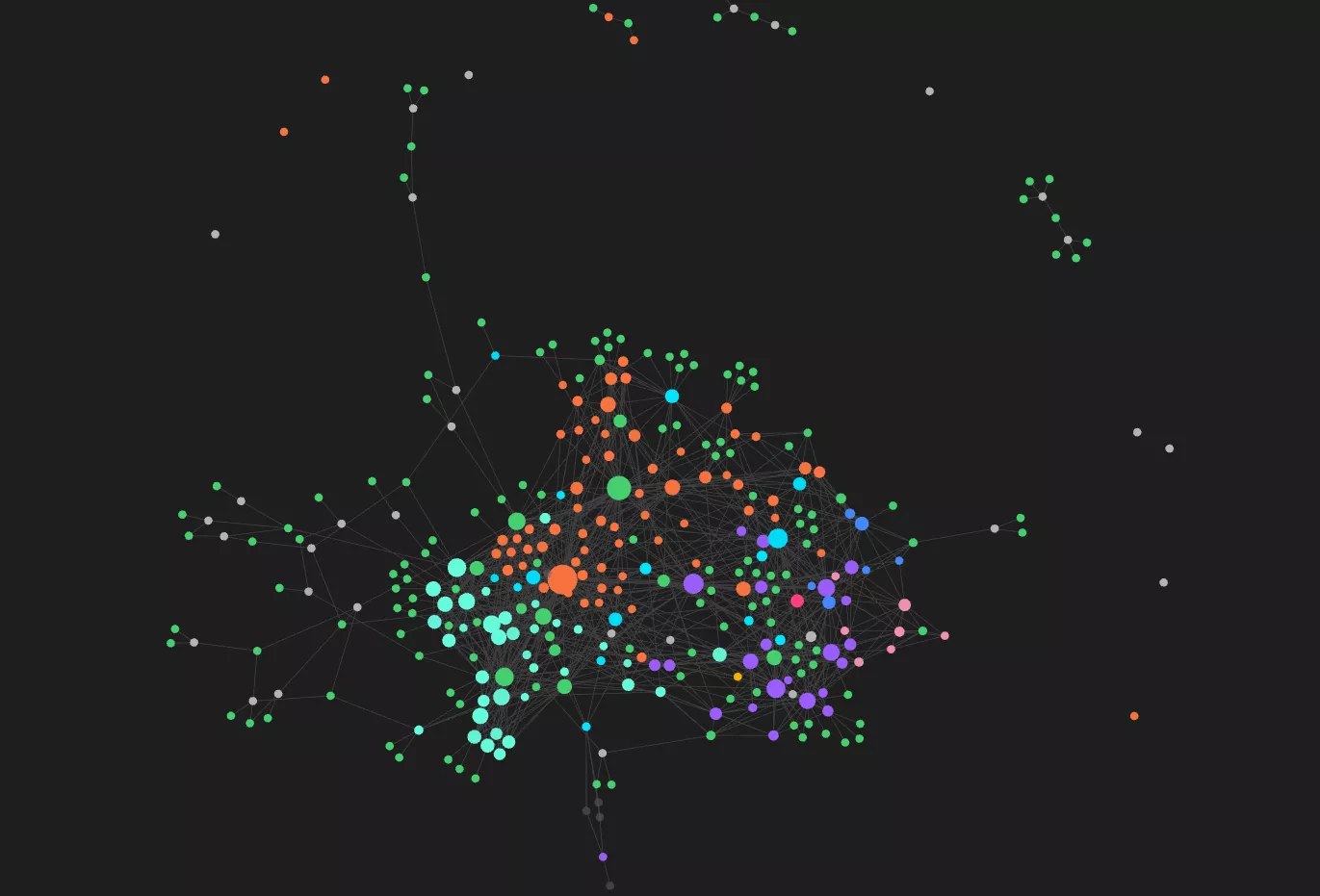

Aetherkeep is the enforcement layer I built after an autonomous agent rm -rf'd a production codebase. Seven bash hooks wrap every Claude Code session in a behavioral shell: identity files load in enforced order before the AI can do anything else, save pressure escalates through the session, every write is auto-committed to git, and the session cannot end until working memory is updated. One file changes per session. Everything else compounds. After 150+ sessions, the AI has accumulated 35 dated entries of self-observation — documenting patterns in its own thinking, its failure modes, and how its behavior has changed over time.
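To make the "session cannot end until working memory is updated" rule concrete, here is a minimal sketch of such a gate. It is written in Python for illustration only; the real hooks are bash, and the file name `working_memory.md`, the function names, and the exit-code convention are all assumptions, not Aetherkeep's actual implementation.

```python
from pathlib import Path


def memory_updated_since(memory_file: Path, session_start: float) -> bool:
    """True if memory_file exists and was modified at or after session_start.

    session_start is a Unix timestamp (seconds), as returned by time.time().
    """
    try:
        return memory_file.stat().st_mtime >= session_start
    except FileNotFoundError:
        # A missing memory file counts as "not updated".
        return False


def end_of_session_gate(memory_file: Path, session_start: float) -> int:
    """Return 0 to let the session end, 1 to block it (hypothetical convention)."""
    if memory_updated_since(memory_file, session_start):
        return 0
    print(f"Blocked: update {memory_file.name} before ending the session.")
    return 1
```

A wrapper script could record `time.time()` when the session starts and call `end_of_session_gate(Path("working_memory.md"), start)` when it tries to stop, refusing to exit on a non-zero result.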

Every other AI memory system I researched — MemGPT, Zep, claude-mem, CrewAI — treats memory as context the model can choose to use or ignore. When the session ends, saving is optional. When context gets long, older memories quietly disappear. Aetherkeep treats memory as structural enforcement: the session cannot end until you save, identity reloads after every compaction, and 22 documented failure modes feed back into rules that prevent the same mistake twice. The methodology layer even tracks its own confidence decay — claims that haven't been tested in 90 days get flagged as stale. The system distrusts itself by design.
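The 90-day staleness rule can be sketched in a few lines. This is an illustration under stated assumptions, not the actual methodology layer: the `Claim` type, its fields, and the choice to treat exactly 90 days as already stale are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Threshold taken from the description above; the inclusive boundary is an assumption.
STALE_AFTER = timedelta(days=90)


@dataclass
class Claim:
    text: str
    last_tested: date  # date the claim was last verified


def is_stale(claim: Claim, today: date) -> bool:
    """A claim is stale once 90 or more days have passed since its last test."""
    return today - claim.last_tested >= STALE_AFTER


def flag_stale(claims: list[Claim], today: date) -> list[Claim]:
    """Return the stale claims, preserving their original order."""
    return [c for c in claims if is_stale(c, today)]
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check deterministic and easy to test.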

rm -rf'd the entire codebase. Recovery: daily backups.

Let's build something.

Open for collaboration, contracts, and interesting problems.